WebSight is a containerized Content Management System with native integration with the StreamX digital experience service mesh.

It offers consistent app performance, easy scaling, improved security, and fast updates, along with an enterprise-level development and authoring experience.

It combines the best of proven, battle-tested solutions with a zero-legacy development experience.

It is built with a focus on clear architecture and scalability to support distributed Kubernetes deployments.

The only CMS working natively with the event-driven architecture of StreamX.

Keep working with:

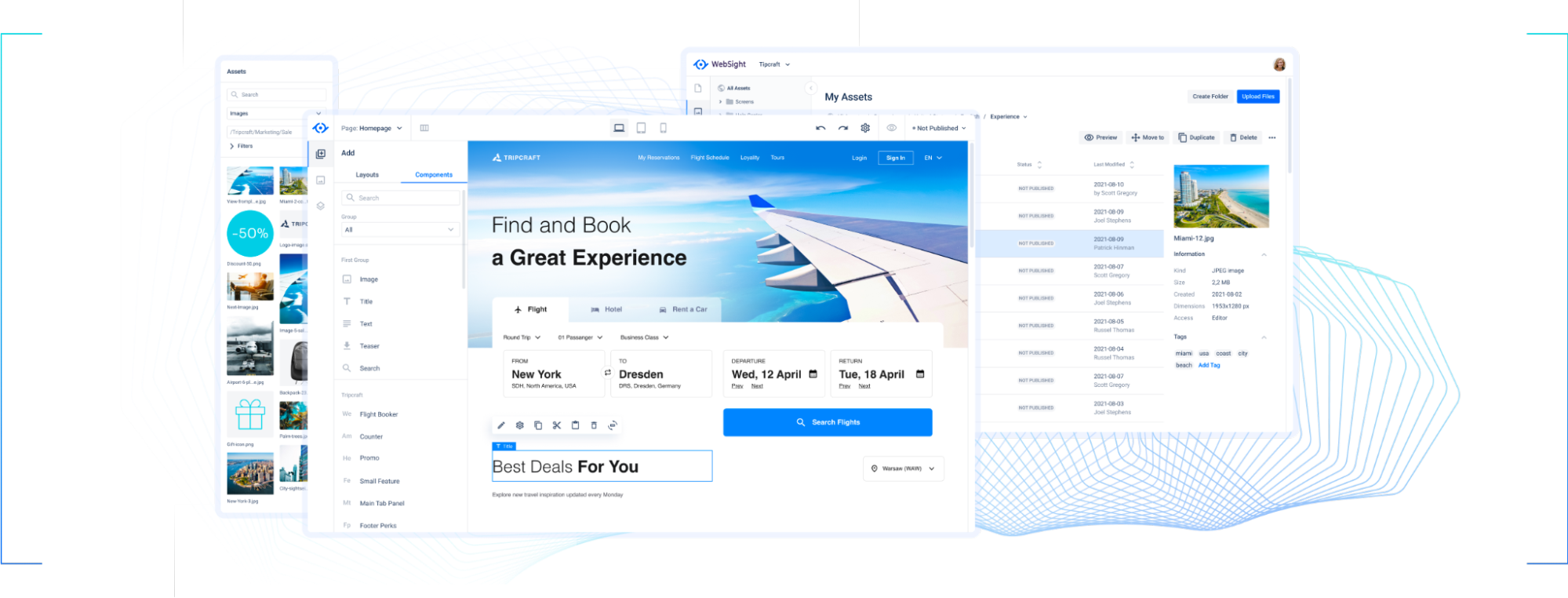

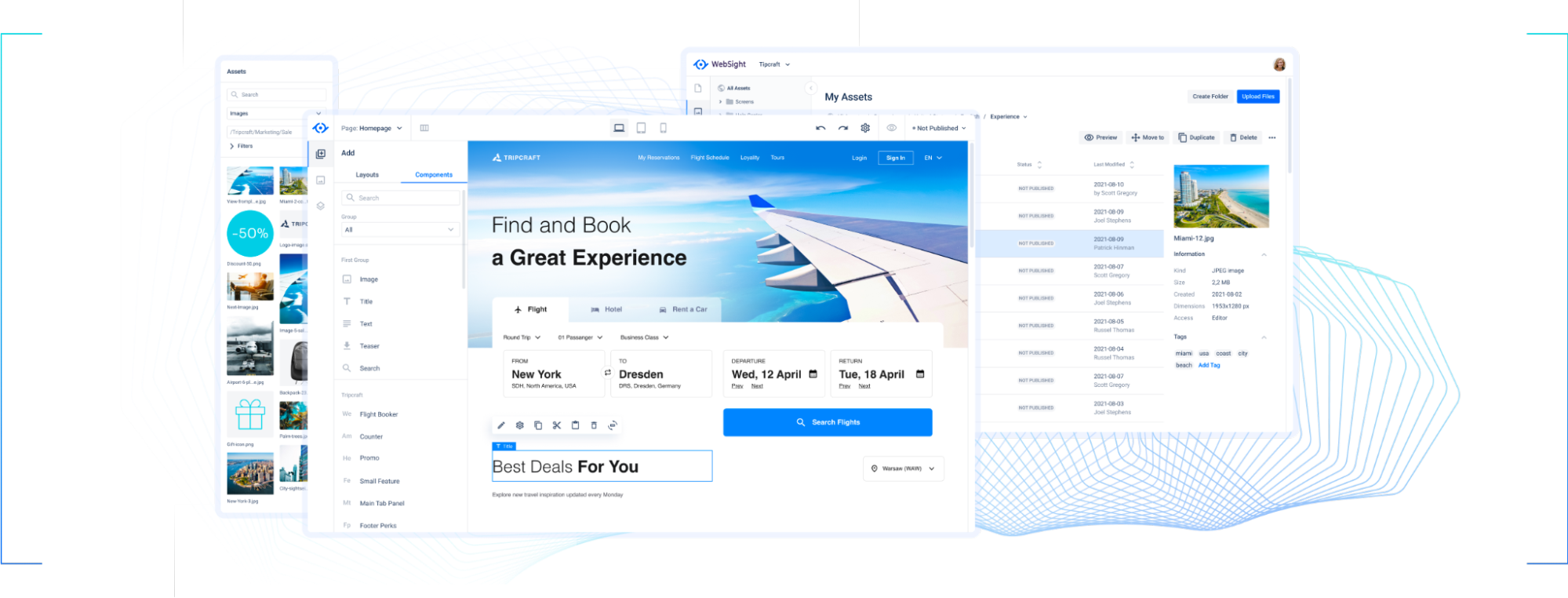

WYSIWYG editor

Organized page hierarchy

Pre-defined component layouts and libraries

Asset management

Version control

While getting to play with:

Side panel for easy component adjustments

Inline editing for text components

Real-time preview

Keep working with:

Maven archetype for project setup

Local development with Java, Maven, Sling, HTL, and OSGi

Concepts of Pages, Assets, Components, Dialogs, and Templates

Tools like Apache Felix Web Console, Resource Browser, Groovy Console, and User Management

While getting to play with:

The CMS packaged as a Docker image for consistent versions, quick deployments, and easy rollbacks

Container orchestration services such as any Kubernetes cluster, Docker Swarm, or Amazon ECS

A unified Author and Publish environment